In philosophy of mind, “mental causation” means mental entities have causal effects, especially physical ones. If physicalism is true, then physical effects are explainable in terms of physical causes (or at least, fundamental physical laws), needing no recourse to causation by anything that is not in fundamental physics. This is the “causal exclusion principle” explicated by Jaegwon Kim (and recently cited in “The Abstraction Fallacy…”), which suggests that, if physicalism is true, then mental entities cannot causally affect anything physical, except insofar as they are already physical entities.

Substance dualists believe in mental causation rather straightforwardly: they believe that the soul has physical effects. Of course, substance dualism contradicts standard physics and physicalism. Type-identity physicalists believe that mental kinds reduce to physical kinds, and that as such, mental causation is a form of physical causation. Mental causation is contrasted with epiphenomenalism, a view under which physical causes can have mental effects but not vice versa.

Epiphenomenalism (e.g. in property dualist form) faces a number of epistemic problems:

- Why did evolution create consciousness if consciousness has no physical effects?

- If our conscious experience has no physical effects, why would our reports about our experience correlate with our experience?

- Why are the physical-mental correlations the way they are? Isn’t this unparsimonious?

Mental causation can help answer these questions. Mental causation can explain why minds have evolutionary utility, why mental facts correlate with reports about them, and why a unified explanation of physical and mental entities could be parsimonious.

However, I suggest that mental causation is not essential to addressing these problems, and that intelligible supervenience of the mental on the physical matters more. By “intelligible supervenience”, I mean that it is not mysterious why the physical facts imply the mental ones. For example, the state of a VM in a computer intelligibly supervenes on the hardware state; it is not hard to understand VM states using hardware specifications and operational semantics. Meanwhile, many philosophers of mind believe that the concept “red qualia” does not intelligibly supervene on neurological states, as it’s mysterious how any neurological state could lead to the experienced redness of red.

More specifically, by intelligible supervenience, I mean that higher-level facts can be explained in terms of grounding low-level facts, by unpacking both the high-level and low-level concepts involved. The explanation may require empirical discovery and need not be available a priori. But an intelligible explanation does not involve brute, opaque bridge laws connecting the higher-level facts to the lower-level facts. Once the realization relation is understood, the correlation ceases to appear arbitrary. Chalmers’ “logical supervenience” (a priori conceptual entailment) is somewhat stronger; I mean intelligible supervenience to be a better match for the way in which scientific subject matter supervenes on physics, which involves empirical study, not just conceptual analysis.

I suggest that intelligible supervenience addresses the epistemic problems of epiphenomenalism, and that mental causation fails to address these problems when it does not go along with intelligible supervenience. I will contrast two views, epiphenomenalist functionalism and Russellian monism, to demonstrate the point.

Epiphenomenalist functionalism

Functionalism is the view that existent mental states are functional, psychological states. For example, functionalism says that memory is a cognitive process which stores and retrieves information, including sensory information and the outputs of object recognition processes. This process may or may not be localizable in the brain; memory could be a distributed function realized by multiple brain regions, rather than being in one place.

Functionalists can be physicalists, and can “bite the bullet” on the causal exclusion principle. Perhaps human memories do not have causal effects, because memory is a distributed function, and it is the fundamental entities in the human brain (e.g. particles) that have causal powers, not distributed functionality.

Functionalists need not believe that mental states have causal effects. We can associate a mental state with its physical macrostate (the set of physical microstates compatible with the mental state), but it is not entirely clear how to attribute causal powers to physical macrostates. Maybe causation only exists at the microscopic level, not the macroscopic level. Thus, functionalist physicalists may be epiphenomenalists.
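
As a minimal sketch of the macrostate idea above, consider a toy system of three binary units. The coarse-graining rule `functional_state` and the labels "active" and "idle" are assumptions made up for illustration, not part of any real theory of mind. The point is only that a macrostate is a set of microstates, and that nothing in this bookkeeping says which level carries causal powers.

```python
from itertools import product

def functional_state(micro: tuple[int, ...]) -> str:
    """Hypothetical coarse-graining: 'active' if at least two of three units fire."""
    return "active" if sum(micro) >= 2 else "idle"

# Enumerate every microstate of a toy three-unit system.
microstates = list(product((0, 1), repeat=3))

# Group microstates by the functional state they realize.
# Each macrostate is just the set of microstates compatible with it.
macrostates: dict[str, set[tuple[int, ...]]] = {}
for micro in microstates:
    macrostates.setdefault(functional_state(micro), set()).add(micro)

for name, members in sorted(macrostates.items()):
    print(name, sorted(members))
```

Here the "active" macrostate is realized by four distinct microstates, which is a small-scale version of multiple realization.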

By analogy, imagine a child playing a game of Minecraft. The child believes that entities in the game, such as creepers, are having causal effects, such as blowing up buildings. A physicalist could say that causation is really at the hardware level: fundamental particles have causal effects, which explains how the hardware works, and the hardware’s dynamics explain why the Minecraft software works. This explanation does not need to attribute causal powers to Minecraft entities such as creepers. And the supervenience relationship is intelligible: it is not hard to see how a hardware state would specify the positions of different creepers.
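
The intelligibility of this kind of supervenience can be sketched in a few lines. The memory layout below is a made-up assumption for illustration (it is not Minecraft's actual format): one count byte followed by pairs of signed 16-bit integers. The update rule is stated purely over bytes, and the "creeper positions" are recovered by a decoding function, so the high-level facts are fixed by the low-level state without any opaque bridge law.

```python
import struct

def decode_creepers(memory: bytes) -> list[tuple[int, int]]:
    """High-level reading of the hypothetical layout: byte 0 is a count,
    then each entity is two little-endian signed 16-bit ints (x, z)."""
    count = memory[0]
    return [struct.unpack_from("<hh", memory, 1 + 4 * i) for i in range(count)]

def hardware_step(memory: bytes) -> bytes:
    """Low-level rule stated over bytes only: add 1 to the 16-bit integer
    stored at offsets 1, 5, 9, ... (one per entity)."""
    out = bytearray(memory)
    for i in range(out[0]):
        (value,) = struct.unpack_from("<h", out, 1 + 4 * i)
        struct.pack_into("<h", out, 1 + 4 * i, value + 1)
    return bytes(out)

# Two entities at (3, 7) and (-5, 12), encoded as raw bytes.
memory = bytes([2]) + struct.pack("<hhhh", 3, 7, -5, 12)

print(decode_creepers(memory))                 # [(3, 7), (-5, 12)]
print(decode_creepers(hardware_step(memory)))  # [(4, 7), (-4, 12)]
```

At the decoded level it looks as though the creepers moved, yet the only rule that was ever applied is the byte-level one.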

The physicalist’s epiphenomenalist account explains why Minecraft players could develop the belief that creepers have causal effects, even though creepers don’t “really” have causal effects. There are no remaining hard questions like “why does the building blow up if the creeper isn’t causing it to blow up?”.

Analogously, functional psychological states can seem to have causal effects, even if some physicalist views deny them causal powers. We can answer the epistemic questions from before. Evolution produces functional psychology, because functional psychology correlates with fitness-increasing behaviors via brain states. Functional psychology correlates with reports about our consciousness, because functional psychology intelligibly correlates with brain states that make such reports (as brain states without such functional psychology would not tend to cause such reports). And because the supervenience is intelligible, there is not a severe problem of parsimony. If we can explain the physical brain states, few or no extra assumptions are needed to understand why the brain would have the functional properties it does; it’s primarily a matter of unpacking the functional concepts and noticing the realization match.

Epiphenomenalist functionalism, therefore, may avoid epistemic problems associated with epiphenomenalism, despite in principle denying mental causation. The basic epistemic objections to epiphenomenalism have responses. For prior work on pattern realism and physicalism, see Daniel Dennett’s “Real Patterns” and David Wallace’s “Decoherence and Ontology, or: How I Learned To Stop Worrying And Love FAPP”.

Russellian monism

Russellian monism combines three theses:

- Structuralism about physics

- Realism about quiddities

- Quidditism about consciousness

Bertrand Russell claimed physics is structural, in that it specifies lawful relationships between quantities, but does not specify the intrinsic nature of its fundamental entities:

All that physics gives us is certain equations giving abstract properties of their changes. But as to what it is that changes, and what it changes from and to—as to this, physics is silent.

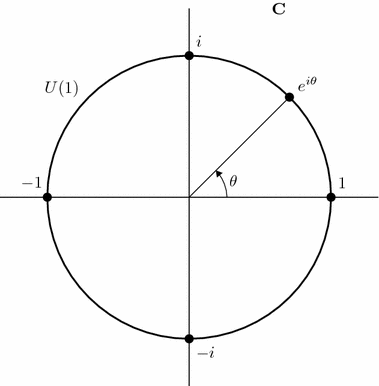

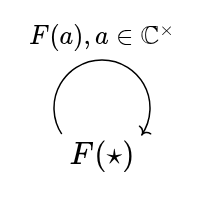

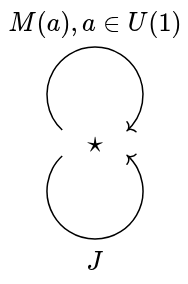

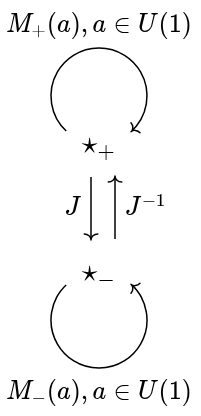

In particular, gauge-invariant quantities (such as mass) are straightforwardly physical, while gauge-dependent ones (such as absolute positions of particles, if they have any) are not. “Quiddities” are posited intrinsic essences of the entities in fundamental physics. They have intrinsic properties that physics does not specify. According to Russellian monism, quiddities are real, and their structure is (or includes as sub-structure) the structures of physics, e.g. quantum field theory may correctly specify a subset of relationships between quiddities.

Russellian monism further posits that these quiddities are relevant to consciousness. In particular, in reductive form, it claims that consciousness does not logically supervene on physics, but does logically supervene on the full reality, which includes quiddities; see Philip Goff’s “Against Constitutive Russellian Monism” for details.

There are panpsychist variants of Russellian monism, which claim that quiddities have experiential qualitative properties (as many philosophers suppose human “red qualia” do), and panprotopsychist variants, which claim that quiddities have some non-experiential properties (perhaps qualitative) which give rise to human experiences, and help explain the phenomenal qualities present in human experience.

As a cartoon version of panpsychist Russellian monism, imagine that a ghost is excellent at mental visualization, and visualizes a great number of small shapes (some colored, others not), which change (perhaps due to the ghost’s intentions, or perhaps “on their own” in awareness) in such a way that they realize the structure of our physical universe, e.g. perhaps they evolve according to cellular automaton rules that underlie physics. One can imagine variants, such as multiple ghosts passing visual shapes to each other telepathically.

Panpsychist Russellian monism endorses mental causation, in that quiddity-level experiences have causal effects on physics. The ghost’s qualia could causally affect the properties of fundamental particles, and higher-level physics. If the ghost’s visualization broke the usual laws of physics, the disturbance could propagate to observable violations of these laws. Thus, panpsychist Russellian monism is not epiphenomenalist.

However, Russellian monism denies intelligible supervenience of the mental on the physical, so mental properties cannot be inferred from physical properties. Imagine a “P-colorblind” human, who is physically identical to a normal human, and yet has no color qualia. Perhaps the ghost visualizes colored shapes when “implementing” the physics of normal humans, yet visualizes grayscale shapes when implementing the physics of P-colorblind humans. Russellian monists would consider this scenario conceivable, for similar reasons as with P-zombies.

This scenario raises epistemic problems, since P-colorblind humans report colored qualia just like normal humans. It is not apparent why human reports of colored qualia would correlate with these humans being made of colored quiddities, how humans could know they are not P-colorblind, how they could know their past selves or other people around them are not P-colorblind, why evolution would not create P-colorblind humans, and so on.
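
The P-colorblind scenario can be sketched as a toy model. Everything here is a hypothetical illustration: the `Quiddity` class, the arithmetic in `physics_step`, and the `report` rule are invented stand-ins, not a claim about real physics or psychology. Each quiddity carries a structural value (the part physics describes) and an intrinsic hue (the part physics is silent on). Dynamics and reports are functions of structure alone, so swapping the hues changes nothing observable.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Quiddity:
    structural_value: int  # the relational role that physics can describe
    intrinsic_hue: str     # hypothetical non-structural property

def physics_step(q: Quiddity) -> Quiddity:
    """Toy law of motion: reads and writes structure only; the hue rides along."""
    return Quiddity((q.structural_value * 3 + 1) % 7, q.intrinsic_hue)

def structure(world: list[Quiddity]) -> list[int]:
    """Everything about the world that physics specifies."""
    return [q.structural_value for q in world]

def report(world: list[Quiddity]) -> str:
    """Toy verbal report, fixed by structure and blind to hue."""
    return "I see red" if sum(structure(world)) % 2 == 0 else "I see green"

normal = [Quiddity(2, "red"), Quiddity(4, "red")]
p_colorblind = [Quiddity(2, "gray"), Quiddity(4, "gray")]

normal_next = [physics_step(q) for q in normal]
p_colorblind_next = [physics_step(q) for q in p_colorblind]

print(report(normal), "|", report(p_colorblind))
print(structure(normal_next) == structure(p_colorblind_next))
```

Both worlds issue the same report at every step even though their intrinsic hues differ, which is the sense in which the reports give no evidence about the quiddities.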

As such, Russellian monism faces epistemic problems similar to those of epiphenomenalist property dualism. Even though Russellian monism (at least in its panpsychist forms) has a place for the mind in fundamental causality, it does not explain why such fundamental mental properties would correlate with human reports about their mental properties. The core reason for this is that it denies intelligible supervenience of the mental on the physical.

For prior work on epistemic problems with Russellian monism and panpsychism, see David Lewis’s “Ramseyan Humility” and Keith Frankish’s “Panpsychism and the Depsychologization of Consciousness”.

Type-identity physicalism

A physicalist view endorsing mental causation is type-identity physicalism. According to this view, mental kinds (e.g. “pain”) are physical kinds (e.g. “C-fiber stimulation”, or some similar neuroscientific kind). If pain is C-fiber stimulation, then pain can have causal powers inherited from C-fiber stimulation. This makes it understandable why people might report pain when their C-fibers fire, as the C-fibers cause downstream mental effects (themselves identical with physical effects).

Type-identity physicalists usually deny logical supervenience of the mental on the physical; they endorse “type B physicalism” and appeal to necessary a posteriori Kripkean identities (though Kripke himself criticized “pain is C-fiber stimulation” in “Naming and Necessity”). Such denial is not strictly necessary, in that perhaps physical omniscience would allow unpacking the identities; see “How to be a type-C physicalist”. The details of type B physicalism involve a rather confusing philosophical dialectic around “2D semantics” and the “phenomenal concept strategy”; I’ll omit the details.

Importantly, type-identity physicalism makes significant empirical claims, at least insofar as it claims to ground subjective experience. Since identities like “pain = C-fiber stimulation” would be a posteriori, there is not a strong a priori reason to believe that there is such a grounding for mental concepts in general. Some concepts, like “elan vital”, fall out of favor in science, rather than being identified with anything. What seem to be experienced mental kinds may be realized by a distributed function, perhaps of a rather general neurological learning system; a given neural cluster may assist in multiple object recognitions and/or concepts, blocking straightforward type identities. For an overview, see SEP’s “Multiple Realizability”.

Because of uncertainty about type-identifications or multiple realizability of a specific experienced mental kind, type-identity physicalism has trouble grounding introspective certainty about experience. A given experienced kind may or may not correspond to a natural physical or neurological kind; it’s an open empirical question. The part of “pain = C-fiber stimulation” that could be intelligible is the functional aspect: C-fiber stimulation could intelligibly fill the functional role of pain, e.g. responding to bodily damage and motivating avoidance behaviors. It is much easier to gain introspective confidence about functional role aspects of experience than about neurological implementation details.

Type-identity physicalism partially addresses the problems with epiphenomenalism. Evolution may create pain, because pain is C-fiber stimulation, and C-fiber stimulation implements the functional role of motivating avoidance of bodily damage, which is evolutionarily useful. Reported beliefs such as “I experience pain” may be justified by scientific confidence that a matching physical type will be found, even if it hasn’t been found yet. And pain correlates with C-fiber stimulation because of the identity. However, these answers are only partially explanatory, because of the unintelligible, a posteriori nature of the mind-brain identity.

Structural realism and causation

Let’s revisit the causal exclusion principle. We have a reason to disbelieve in mental causation: physical events have sufficient physical causes, except perhaps in cases of stochastic under-determination, and such stochasticity doesn’t accommodate mental causation either. Hence, perhaps functionalists should bite the bullet on epiphenomenalism. But it is worth considering criticisms of the causal exclusion principle.

Bertrand Russell, in “On the Notion of Cause, with Applications to the Free-Will Problem”, noted:

All philosophers, of every school, imagine that causation is one of the fundamental axioms or postulates of science, yet, oddly enough, in advanced science such as gravitational astronomy, the word “cause” never occurs.

While this is an overstatement, it is by no means obvious how to derive causal claims from fundamental physical theories such as quantum field theory; the theories are more naturally stated as laws over spacetime, laws over a wave-functional configuration space, or in operator-algebraic terms. (See “Quantum common causes and quantum causal models” for existing work in quantum causation.)

Structural realism is a view in philosophy of science, which agrees with Russellian monism’s “structuralism about physics”, denies any epistemic access to non-structural properties, and in “ontic” form, denies non-structural properties altogether. A central structural realist book, “Every Thing Must Go” (ETMG), criticizes Kim’s causal exclusion argument on physical grounds:

Kim’s argument [for causal exclusion], however, depends on non-trivial assumptions about how the physical world is structured. One example is the definition of a ‘micro-based property’ which involves the bearer ‘being completely decomposable into nonoverlapping proper parts’ (1998, 84). This assumption does much work in Kim’s argument—being used, inter alia, to help provide a criterion for what is physical, and driving parts of his response to the charge, an attempted reductio, that his ‘causal exclusion’ argument against functionalism generalizes to all non-fundamental science.

ETMG, in common with Russell, expresses skepticism about causation in fundamental physics:

Causation, we claim, is, like cohesion, a notional-world concept. It is a useful device, at least for us, for locating some real patterns, and fundamental physics might play a role in explaining why it is.

I find this view of causation in fundamental physics highly plausible. Perhaps “causation” does not function well as a heavyweight metaphysical or fundamental physics concept, but has a useful explanatory role in science and in everyday life. This view of causation would, of course, demote the causal exclusion principle in philosophy of mind, and make it easier to formulate functionalism without epiphenomenalism. However, as I’ve argued, the metaphysics of causation are not load-bearing for epistemology about mental properties; instead, intelligible supervenience does the work.

Conclusion

In explaining how consciousness correlates with reported beliefs about consciousness, it is prima facie desirable to appeal to mental causation: if consciousness is causing the reports, this causation could explain the correlation. But mental causation is hard to reconcile with physicalism and the causal exclusion principle. As such, it makes sense to consider alternative ways that consciousness could correlate with reports. Intelligible supervenience (and its stronger counterpart, logical supervenience) can explain correlations without recourse to heavyweight causal claims. Moreover, causation might not exist in fundamental physics, adding extra incentive to find non-causal explanations of mental-physical correlations. As such, I believe intelligible supervenience provides better explanations of introspective accuracy than does mental causation.

A residual worry is that there are remaining qualitative, subjective facts that cannot be characterized functionally or structurally. On an exhaustively structuralist or materialist account of physics, such qualities cannot be characterized physically either. Features attributed to qualia (subjectivity, privacy, ineffability) undermine any attempt to fit them into a physicalist and/or functionalist theory of mind. This poor fit is not just a metaphysical problem, but also an epistemic problem; it makes it hard to explain why beliefs about qualia would correlate with the qualia themselves. For criticisms of qualia realism, see Daniel Dennett’s “Quining Qualia” and Keith Frankish’s “Consciousness is not what it seems”; perhaps it is more correct to debunk qualia (explaining why people would believe in them, even if they are not real) than to ground them in physics.

While there is ongoing philosophical debate in the metaphysics of causation, I have argued that it is mostly irrelevant to the epistemology of mental properties, because epistemology is much more dependent on correlations (especially intelligible ones) than on causation. Causation can explain correlations and lawful constraints, but it is the correlations and laws that form the base of epistemology: how judgments correlate with reality. As such, epistemic arguments against epiphenomenalism in philosophy of mind are more properly formulated as epistemic arguments against views lacking intelligible mental/physical correlations, especially intelligible supervenience.